Trade traditional delivery models for Velocity-as-a-Service

By Alejandra Renteria

95% of enterprise AI projects fail—CodeRoad’s Velocity-as-a-Service restores and redefines momentum by aligning talent, systems, and execution around measurable business ROI.

Why traditional delivery models fail AI projects (and what actually works)

Key takeaways

- AI Failure is a System Failure: 95% of enterprise AI pilots fail to deliver measurable ROI—not because the technology doesn't work, but because traditional delivery models treat AI as an augmentation problem rather than an integration challenge.

- The Coordination Tax: The three most common failure patterns are chasing technology without business alignment, skipping the discovery phase to start coding immediately, and deploying AI on data infrastructure that was never built for it.

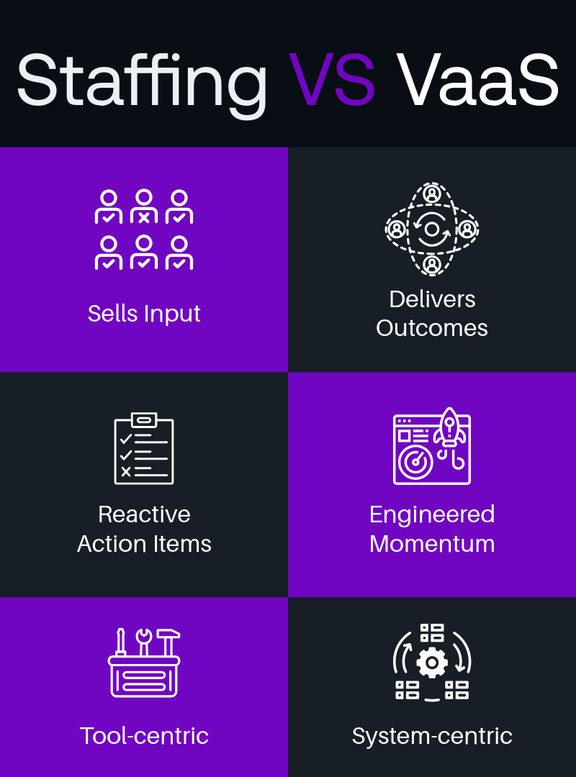

- More Talent Doesn’t Solve Everything: Staff augmentation alone won't solve AI delivery challenges. More developers without a unified system means slower delivery, fractured alignment, and teams working on disconnected pieces rather than driving toward measurable outcomes.

- Velocity Requires Preparation: High-performing organizations are three times more likely than their peers to redesign workflows instead of bolting AI onto existing processes. They start with business outcomes and work backward to solutions, which sometimes aren't AI at all.

- From Staffing to Throughput: Velocity-as-a-Service offers an outcome-based alternative: aligning elite talent, AI-powered delivery, and digital transformation expertise into a single engine that turns vision into measurable business impact.

Your company just spent six months on an AI pilot. The demos looked great. The team was excited. And then—nothing. No measurable ROI, no path to production, just another initiative quietly shelved while leadership wonders where the budget went.

If this sounds familiar, you're not alone. We're watching billions of dollars evaporate in failed AI projects, and the problem isn't the technology.

It’s in the delivery.

The scale of AI project failure is staggering

The numbers are hard to ignore.

A report from MIT Media Lab's Project NANDA found that despite $30-40 billion in enterprise spending on generative AI, 95% of organizations are seeing no business return. The study, which analyzed over 300 publicly disclosed AI initiatives and conducted interviews with executives across industries, revealed what researchers call the "GenAI Divide"—a stark gap between high adoption rates and actual business transformation.

Only 5% of custom enterprise AI tools reach production. The rest stall in pilot purgatory, delivering impressive demos but zero measurable impact on profit and loss.

The trend is accelerating in the wrong direction. According to S&P Global Market Intelligence's Voice of the Enterprise survey of over 1,000 IT professionals across North America and Europe, the percentage of companies abandoning the majority of their AI initiatives before they reach production surged from 17% to 42% year over year. On average, organizations are scrapping 46% of projects between proof of concept and broad adoption.

And it's not just generative AI facing these challenges. Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The firm found that most agentic AI projects today are early-stage experiments or proofs of concept, driven largely by hype and often misapplied.

We're looking at tens of billions of dollars in wasted investment. The question isn't whether AI works; it clearly does for the companies getting it right. The question is why traditional delivery approaches keep breaking down.

Three big reasons traditional delivery models fail AI projects

The data points to a consistent set of failure patterns. Understanding them is the first step toward avoiding them.

1. A scalability gap: misalignment of business goals and KPIs

The first and most common culprit is chasing technology without business alignment.

We've seen this pattern repeatedly: organizations asking "Can we build an AI product?" instead of "What specific problem are we solving, and what KPI will move if we solve it?" The result is technically impressive demos that never translate to business impact.

2. A discovery gap: no overarching strategy

The second failure pattern is launching initiatives without a comprehensive strategy. Too many organizations skip the discovery phase entirely. They're so eager to demonstrate AI progress that they start coding before they've mapped the technology landscape, assessed data readiness, or defined success metrics.

3. A data hygiene debt: fragmented silos

The third pattern is deploying AI on top of a data infrastructure that was never built for it.

According to Informatica's survey, 43% of respondents say that data quality, completeness, and readiness are among the biggest obstacles preventing GenAI initiatives from reaching the finish line.

You can have the most sophisticated model in the world, but if it's pulling from fragmented data silos with inconsistent formats and governance gaps, the outputs will be unreliable at best and dangerous at worst.

These three patterns share a common thread: they treat AI as something you bolt onto existing operations rather than something that requires rethinking how work gets done.

Why standard staff augmentation models won’t solve everything

When companies struggle to scale AI initiatives, the instinct is predictable: add more developers. Assemble an AI team. Hire specialists. The logic seems sound, as more hands should mean faster progress.

But scaling AI isn't an augmentation problem. It's an integration problem.

Traditional staff augmentation works well for many software projects. You identify a skill gap, bring in external talent, and they contribute to an existing codebase with established patterns and workflows. The work is often discrete and measurable. A developer can pick up tickets, attend standups, and deliver features without needing deep context on the business strategy.

AI projects operate differently. They require tight alignment between technical execution and business objectives from day one. A machine learning engineer who doesn't understand why a model is being built—what KPI it's meant to move, what business process it should improve—will optimize for the wrong outcomes. They'll build technically impressive systems that never make it past the demo stage.

The problem compounds at scale. More people without a unified system means slower delivery, not faster. Communication overhead multiplies. Alignment fractures. The team becomes a collection of talented individuals working on disconnected pieces rather than an integrated engine driving toward measurable outcomes.
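One rough way to see why communication overhead multiplies: if every team member may need to coordinate with every other, the number of pairwise channels grows as n(n-1)/2, which is much faster than headcount. The sketch below is illustrative only. The function name and the team sizes are assumptions made for this example, not a measurement of any real team.

```python
def communication_channels(team_size: int) -> int:
    """Count distinct person-to-person pairs in a team (hypothetical helper)."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    # Each of n people can pair with n - 1 others; divide by 2 to avoid double counting.
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    # Assumed team sizes, chosen only to show how quickly the count grows.
    for size in (5, 10, 20):
        print(f"{size} people -> {communication_channels(size)} possible channels")
```

Under this simple model, doubling a team from 10 to 20 people roughly quadruples the possible channels (45 to 190), which is one reason headcount alone rarely translates into speed.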

This is the pattern we see repeatedly: distributed teams end up as add-ons rather than integrated parts of the operational engine. They're handed tasks without context and expected to execute without understanding the "what, how, and why" behind the work. By the time organizations realize what's happening, they're untangling a web of fractured processes and disconnected efforts.

The enterprises that break through are the ones that stop treating AI delivery as a headcount problem and start building systems—delivery engines that turn talent, technology, and strategy into repeatable, measurable velocity.

The power of Velocity-as-a-Service (VaaS) for smarter project delivery

If 95% of AI pilots fail to deliver measurable business impact, what are the 5% doing differently?

McKinsey found that high performers (organizations reporting significant financial returns from AI) stand out for thinking beyond incremental efficiency gains. They treat AI as a catalyst to transform their organizations, redesigning workflows and accelerating innovation. In fact, high performers are three times more likely than their peers to redesign workflows instead of bolting AI onto what already exists.

This is the fundamental shift that separates success from failure. Successful organizations don't start with the technology and ask how to use it. They start with the business outcome and work backward to the solution—which sometimes isn't even AI at all.

This is where an outcome-based approach like Velocity-as-a-Service becomes essential. Rather than asking "how many developers do you need?" it begins with "what business outcome are you trying to achieve?" The difference sounds subtle, but it changes everything downstream, from how teams are structured to how success gets measured.

In practice, this means pushing back when the flashy solution isn't the right one. Sometimes the answer isn't a fully fledged agentic AI system but a simpler workflow engine that solves the problem faster and cheaper. The goal is finding the right solution, not the most impressive one.

Successful projects also operate with what we call an "operator mindset." Teams function like owners, not contractors. They're not just executing tickets; they're developing roadmaps, understanding the industry, assessing existing data and information infrastructure, and building realistic paths to deliver business value. This level of integration requires more than staffing. It requires a unified system that aligns talent, technology, and strategy from day one.

How to stop your AI projects from failing with VaaS

AI projects don't fail because the technology doesn't work. They fail because organizations approach them with the wrong delivery model—treating AI as an augmentation problem when it's fundamentally an integration challenge.

The companies in the 5% that succeed share common characteristics. They start with business outcomes, not technology experiments. They invest heavily in discovery before writing a single line of code. They redesign workflows rather than bolting AI onto broken processes. And they build unified systems that align talent, technology, and strategy into a single delivery engine.

And the competitive window is narrowing. The companies that master velocity now are building capabilities that compound over time. Those that wait will find themselves playing catch-up in a game that's already been decided.

The question isn't whether AI will reshape your industry. It's whether you'll be the one doing the reshaping—or the one being left behind. The difference comes down to delivery: not just having talented people, but having the systems that turn talent into measurable outcomes.

Traditional approaches won't get you there. Velocity-as-a-Service will.