The execution layer your AI roadmap is missing

We engineer, deploy, and operationalize production-grade AI systems — with the MLOps governance, data infrastructure, and agentic delivery workflows that take AI from proof-of-concept to measurable business outcomes. Accelerate your AI roadmap and deliver faster, smarter and leaner outcomes with Velocity-as-a-Service.

Enterprise organizations that struggle to see AI investments produce measurable returns usually lack the infrastructure to deliver them. This gap shows up as two distinct, repeatable failure points, both direct hits to ROI.

1. The Production Gap (POC → Reality)

AI pilots prove the concept, but they’re not built to survive production. There’s no deployment pipeline, no model governance, no real-time data infrastructure to support live environments. The result: approved initiatives that stall after the demo. Investment goes into models that never generate returns because the path to production was never engineered.

Why it kills ROI: capital is spent validating ideas that never become operational systems.

2. The Capacity Gap (Roadmap → Execution)

Even when the production path exists, AI initiatives compete with everything else the engineering team owns. Incidents, legacy support, and product priorities constantly interrupt progress. Without protected delivery capacity, momentum resets every sprint.

Why it kills ROI: timelines stretch indefinitely, and value realization keeps getting deferred.

Until both gaps are solved, with production readiness and dedicated execution capacity in place, AI remains a roadmap item, not a revenue driver.

AI-first software delivery is not a technology choice — it is an engineering methodology. It means building the delivery system around the requirements of AI workloads from the start: governed data pipelines, automated model deployment, drift monitoring, and agentic workflows designed to operate reliably in production environments rather than just in demonstrations. When AI delivery is treated as an engineering discipline rather than a research activity, three outcomes change immediately:

The average enterprise AI pilot takes 6–12 months to reach production when it reaches production at all. AI-first delivery compresses this timeline by designing the production path from day one — so the model, the infrastructure, and the governance framework are co-developed rather than assembled sequentially after the model is considered "done." The pilot is designed to become the production system, not replaced by one.

A model that degrades silently after deployment isn't a production asset — it's a liability. AI-first delivery builds monitoring, drift detection, and automated retraining protocols into the deployment architecture from the start — so AI systems stay accurate, governed, and auditable over time rather than becoming the source of the next production incident six months after launch.
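To make drift detection concrete, here is a minimal sketch of one common check, the Population Stability Index (PSI), which compares the distribution of a single numeric feature at training time against live traffic. The function names, the ten-bin layout, and the 0.2 threshold are illustrative assumptions, not a description of any specific CodeRoad tooling.

```python
import math
from typing import List, Sequence


def population_stability_index(
    baseline: Sequence[float],
    live: Sequence[float],
    bins: int = 10,
) -> float:
    """Compare two samples of one numeric feature; higher means more drift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for constant features

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in an edge bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = bucket_shares(baseline)
    actual = bucket_shares(live)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def has_drifted(
    baseline: Sequence[float],
    live: Sequence[float],
    threshold: float = 0.2,  # assumed cut-off; tune per feature
) -> bool:
    """Flag drift when PSI exceeds the chosen threshold."""
    return population_stability_index(baseline, live) > threshold
```

In a deployment, a check like this would typically run on a schedule per feature and feed an alerting or retraining workflow rather than being called by hand.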

The most expensive AI investment most organizations make is the first one — because the infrastructure to support it doesn't exist yet. AI-first delivery produces reusable components: governed data pipelines, deployment frameworks, monitoring infrastructure, and agentic workflow patterns that every subsequent AI initiative can build on. The second initiative is faster than the first. The third is faster than the second. The delivery capability compounds.

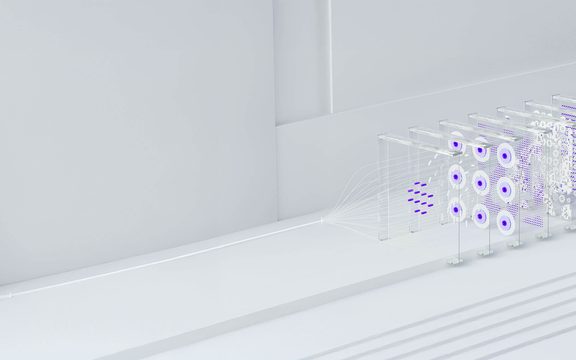

CodeRoad approaches AI delivery as a systems engineering problem. The difference between an AI initiative that ships and one that stalls is almost always the delivery system surrounding the model, not the model itself. Our AI-first software delivery methodology integrates four engineering disciplines that most AI programs treat as separate workflows, and that separation is precisely where initiatives die.

Discover where your AI stands, then fix the gaps. Get your free maturity map.

Production AI systems require production-grade data infrastructure. We engineer the real-time ingestion pipelines, data governance frameworks, and feature stores that give AI models the reliable, consistent, low-latency inputs they need to perform in production. The data layer is not a prerequisite we assume someone else has handled — it is the first engineering workstream we assess and, where needed, build. An AI initiative sitting on fragmented, batch-processed, or ungoverned data is not a production AI initiative — it is a pilot waiting to fail.

We build the CI/CD infrastructure for AI that most organizations don't have: automated model deployment pipelines, version control, A/B testing frameworks, performance monitoring, and drift detection. This is the engineering layer that makes production AI governable — so models can be updated, audited, retrained, and rolled back with the same discipline applied to software releases in regulated environments.
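As an illustration of what "updated, audited, and rolled back" can mean in practice, the sketch below models a tiny in-memory model registry that records which version is live and can revert to the previous one. The `ModelRegistry` class and its methods are hypothetical; a production setup would persist this state in a real registry service.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry; a real one would persist this state."""

    artifacts: Dict[str, str] = field(default_factory=dict)  # version -> artifact location
    history: List[str] = field(default_factory=list)  # promotion order, newest last

    def register(self, version: str, artifact_uri: str) -> None:
        """Record a new, immutable model version."""
        if version in self.artifacts:
            raise ValueError(f"version {version} already registered")
        self.artifacts[version] = artifact_uri

    def promote(self, version: str) -> None:
        """Make a registered version the live one."""
        if version not in self.artifacts:
            raise KeyError(f"unknown version {version}")
        self.history.append(version)

    def rollback(self) -> str:
        """Revert to the previously promoted version and return it."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.history[-1]

    @property
    def live(self) -> Optional[str]:
        """Currently live version, or None if nothing has been promoted."""
        return self.history[-1] if self.history else None
```

Keeping the promotion history as an append-only list is what makes the rollback auditable: every change to the live version leaves a trace.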

The AI roadmap items that lose momentum to operational priorities don't lose momentum because the team isn't capable — they lose momentum because the team isn't structurally protected from the reprioritization that operational environments create constantly. CodeRoad provides dedicated AI engineering capacity with a single mandate: move the AI initiative forward. Our nearshore pods are not part of the internal team's sprint — they are the AI delivery team, operating alongside the internal team without competing for the same bandwidth.

We design and implement autonomous AI agents capable of executing multi-step operational processes with human-in-the-loop governance at the decision points that require it. The result is AI that doesn't just produce outputs for humans to act on — it takes actions within governed parameters, escalates when it encounters decisions outside those parameters, and learns from the outcomes of both.

What distinguishes CodeRoad as an AI delivery partner is our accountability. Our outcome-based delivery system is designed so our engineers co-own your roadmap and ensure measurable outcomes are delivered.

The same team that defines the roadmap builds it, turning strategy into an engineering workflow from day one

Pods own delivery end-to-end: code, pipelines, and production systems—not just recommendations

When you partner with CodeRoad, success isn’t a roadmap delivered; it’s AI systems live, performing, and driving results

AI is the layer you leverage. Velocity-as-a-Service is how it reaches production.

What you build underneath depends on the execution challenge you’re solving. Each of these capabilities carries its own engineering discipline, delivery model, and production requirements. The AI Delivery layer ensures they ship.

30 minutes to unlock the right AI capability for you. Book Intro Now.

When the goal is AI that executes work, not just assists with it. Agentic systems observe, decide, and act across multi-step workflows, autonomously, with human-in-the-loop governance at every decision point that requires it. This is the capability that moves AI from a productivity tool to an operating system for your business.

When off-the-shelf AI won't solve the problem. We engineer custom AI and ML systems built around your specific data, your business logic, and your competitive requirements, not a generic wrapper around a public model.

When the goal is AI that generates content, decisions, or outputs grounded in your proprietary data. We build secure RAG architectures and LLM integrations that ensure your AI acts as a subject matter expert on your business, not the public internet.

When the goal is a conversational AI system that integrates into your existing stack and performs reliably in production. We don't build chatbots as experiments — we deliver production-ready conversational AI with NLP, CRM integration, and the governance infrastructure that makes enterprise deployment viable.

AI project delivery fails when it is governed by the same methodology used for standard software delivery. The model-building, data engineering, governance design, and infrastructure provisioning that production AI requires don't fit neatly into a two-week sprint cadence designed for feature development. The methodology has to be designed for the workload. CodeRoad's AI project delivery methodology is built around three governing principles that apply across every engagement regardless of use case, industry, or platform complexity.

AI systems that operate without human oversight at critical decision points create regulatory exposure, produce unpredictable outcomes, and erode the organizational trust that AI adoption depends on. Our AI orchestration and delivery model assigns CodeRoad Agent Pilots — senior engineers who act as AI orchestration leads — to validate, harden, and govern AI-generated outputs at every decision point that requires human judgment. One agent performs the task. A second audits the result. The Agent Pilot provides final approval on high-stakes decisions. This is not a bottleneck — it is the governance layer that makes enterprise AI deployable in regulated and high-stakes environments.
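The worker, auditor, and Agent Pilot flow described above can be sketched as a small control function. This is an assumption-laden illustration: the callables stand in for real agents and a real approval interface, and the names `run_governed_task` and `Decision` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    output: str
    approved: bool
    decided_by: str  # "auditor" or "pilot"


def run_governed_task(
    task: str,
    worker: Callable[[str], str],
    auditor: Callable[[str, str], bool],
    pilot_approves: Callable[[str, str], bool],
    high_stakes: bool,
) -> Decision:
    """One agent performs the task, a second audits it, a human signs off if needed."""
    output = worker(task)

    # The auditing agent can reject the result before any human is involved.
    if not auditor(task, output):
        return Decision(output, approved=False, decided_by="auditor")

    # High-stakes results always wait for the human pilot's final approval.
    if high_stakes:
        return Decision(output, pilot_approves(task, output), decided_by="pilot")

    return Decision(output, approved=True, decided_by="auditor")
```

The design choice worth noting is that the human is only consulted after the automated audit passes, so reviewer time is spent on results that are already plausible.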

Every AI initiative CodeRoad delivers is designed for the production environment it will operate in — not for the development environment where it was built. Security requirements, compliance frameworks, integration architecture, performance constraints, and data governance obligations are engineering inputs from sprint one, not requirements added to the delivery backlog after the model is complete. The result is a system that moves from development to production without the weeks of remediation work that typically separates a working prototype from a deployable system.

The engagement isn't complete when the model is deployed. It's complete when the model is performing in production against the business outcomes it was designed to produce — reduced fraud rates, faster processing cycles, improved prediction accuracy, measurable cost reduction. Our pods co-own the outcome, not just the sprint. This accountability structure is what distinguishes an AI delivery partner from an AI advisory firm — and it's what makes the difference between AI that hits the P&L and AI that hits the roadmap.

AI orchestration and delivery requires a structured execution path that takes AI from diagnostic to production to scaled deployment, with governance and accountability at every stage. This is not a linear waterfall process or a research sprint — it is a continuous delivery loop designed to move fast and govern tightly simultaneously.

30 minutes to move from pilot to production. Book Assessment.

Most organizations are paralyzed by choice — 50 potential AI use cases and no clear execution path for any of them. We begin every AI delivery engagement with a structured diagnostic that identifies where the AI initiative is stalled, what the highest-yield intervention is, and what the production path actually requires before any engineering work begins.

The output of Stage 1 is not a strategy deck. It is a technical and operational blueprint that answers three questions: what is the fastest path to a production-ready AI system, what data infrastructure needs to exist to support it, and what governance framework needs to be designed alongside it rather than after it.

Unlike traditional discovery sessions that produce sandbox prototypes, our VaaS Launchpad builds the first production-ready AI component — embedded into the client's existing architecture, governed from day one, and designed to become the foundation for the broader AI deployment rather than a demonstration that gets discarded.

This is where pilots cross to production. The Launchpad is a 30-day execution sprint that delivers a working, data-grounded, governed AI system — not a proof-of-concept that requires another 12 months of engineering work to survive a production environment.

What the Launchpad produces:

Once initial value is proven in production, execution accelerates. AI capabilities expand across workflows, infrastructure, and data systems, establishing a new delivery tempo for sustained ROI. New agents are trained, integrated, and refined using real performance data from the live system. The delivery infrastructure built in Stage 2 becomes the foundation for every subsequent initiative, so the organization is building a compounding AI delivery capability, not restarting from scratch with each new use case.

AI Delivery & ROI is where pilots stop stalling and AI roadmap items stop losing ground to operational priorities. These are specialized execution units built for the gap between proof-of-concept and production impact. When an initiative stalls, we don't extend the experiment. We deploy the studios that engineer the infrastructure layer required to make AI hit the P&L.

The driving force behind CodeRoad's agentic AI capabilities. This studio moves teams from proof-of-concept to real-world production impact, engineering the MLOps, integration layer, and agentic workflow infrastructure that most organizations are missing when pilots fail to cross into the P&L. They operate using LangGraph and CrewAI orchestration frameworks, with a mandate to deliver measurable outcomes, not demonstrations.

Production AI needs production-grade data. This studio rebuilds the data pipelines, lakes, and real-time ingestion systems that AI initiatives depend on: replacing batch processes with live data flows and eliminating the fragmentation that causes AI outputs to be inconsistent or untrustworthy at scale.

When stalled AI initiatives require infrastructure, integration, or platform work that sits outside the AI layer itself, this studio provides the end-to-end execution capacity to complete it. Full-stack ownership across frontend, backend, and DevOps ensures that nothing outside the model blocks delivery.

Production AI systems require a technology stack designed for the performance, governance, and reliability requirements of enterprise environments — not just the modeling requirements of research environments. Our nearshore engineering pods build with the AI delivery stack that takes initiatives from notebook to production.

MLflow, Weights & Biases, automated deployment pipelines — model versioning, performance monitoring, drift detection, and retraining protocols. For organizations where AI systems need to stay accurate and auditable after deployment, not just at launch.

Snowflake, Databricks, real-time ingestion via Apache Kafka, and transformation with dbt: the data stack that provides AI models with reliable, governed, low-latency inputs at production scale. For organizations where the data layer is the actual blocker between pilot and production.

LangChain, LlamaIndex, custom RAG architectures, multi-agent orchestration — the agentic workflow layer that moves AI from outputs to actions. For organizations building AI systems that need to execute multi-step operational processes with human-in-the-loop governance at critical decision points.

AWS SageMaker, Azure ML, Google Vertex AI — cloud-native model deployment environments with CI/CD pipelines, containerized inference, and auto-scaling infrastructure. For organizations that need AI systems to perform reliably under variable production load without manual infrastructure management.

SOC 2, HIPAA, GDPR-aligned AI architecture — role-based access controls, data masking, explainability frameworks, and audit trail generation built into the deployment infrastructure. For organizations in regulated industries where AI governance isn't optional.

Pinecone, Weaviate, pgvector — the retrieval infrastructure that grounds AI models in proprietary organizational data rather than public training sets. For organizations building RAG architectures that need to ensure AI outputs are accurate, current, and organizationally specific.
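For readers unfamiliar with how retrieval grounds a model, the following minimal sketch shows the core operation a vector store performs: ranking stored documents by cosine similarity to a query embedding. The tiny hand-written vectors and document IDs are placeholders; a real system would use an embedding model and one of the stores named above.

```python
import math
from typing import Dict, List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(
    query_vec: Sequence[float],
    index: Dict[str, Sequence[float]],
    k: int = 2,
) -> List[str]:
    """Return the IDs of the k documents most similar to the query."""
    ranked = sorted(
        index,
        key=lambda doc_id: cosine_similarity(query_vec, index[doc_id]),
        reverse=True,
    )
    return ranked[:k]


# Placeholder embeddings, purely for illustration.
toy_index = {
    "refund_policy": [1.0, 0.0, 0.0],
    "onboarding_guide": [0.0, 1.0, 0.0],
    "pricing_sheet": [0.9, 0.1, 0.0],
}
```

The retrieved documents are then passed to the model as context, which is what keeps answers tied to organizational data rather than to whatever the model learned in training.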

AI consulting produces recommendations, roadmaps, and strategy documents. AI delivery produces working, production-grade AI systems. CodeRoad does both — but the engagement doesn't end at the roadmap. The same team that maps the initiative builds it, deploys it, and governs it in production. The handoff gap where most AI programs die doesn't exist in a CodeRoad engagement because there is no handoff.

Almost always one of three things: the data infrastructure feeding the model isn't production-ready, there is no governed deployment path from development to production, or the engineering team responsible for the initiative is also responsible for operational priorities that keep reclaiming their bandwidth. Our AI Maturity Map identifies which of these is the primary blocker in 10 minutes.

Dedicated delivery capacity that is structurally protected from operational reprioritization. Our nearshore AI delivery pods operate alongside the internal team — they are not part of the internal sprint. When a production incident pulls the internal team into firefighting, the AI delivery pod continues moving the initiative forward. The momentum is maintained because the capacity is protected.

We build monitoring, drift detection, and automated retraining protocols into the deployment architecture from day one — not as a post-launch addition. Every AI system CodeRoad deploys includes performance dashboards, automated alerts for accuracy degradation, and a defined retraining trigger so the system stays current without requiring manual intervention to identify when it has drifted.
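A "defined retraining trigger" can be as simple as a rule over recent labelled outcomes. The sketch below is one possible form, assuming ground-truth labels arrive after predictions; the class name, window size, and accuracy floor are illustrative assumptions rather than fixed values.

```python
from collections import deque


class RetrainingTrigger:
    """Signals retraining when rolling accuracy falls below a floor."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9):
        self.outcomes = deque(maxlen=window)  # True for each correct prediction
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual) -> bool:
        """Record one labelled outcome; return True when retraining should start."""
        self.outcomes.append(prediction == actual)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough evidence yet
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.min_accuracy
```

Waiting for a full window before firing avoids triggering a retrain on the first few unlucky predictions after a deployment.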

No. We use private LLM instances and local embeddings as part of our Nearshore 3.0 commitment. Proprietary organizational data is never exposed to public training sets — it remains within governed infrastructure aligned to the organization's compliance requirements.

Through our 30-day VaaS Launchpad, we deliver a functional, data-grounded, governed AI component within the first sprint cycle. This is not a sandbox prototype; it is a production-deployed system embedded into the existing architecture with monitoring and governance in place.

Execution ownership. The roadmap and the delivery are a single continuous workflow rather than sequential engagements. Architecture decisions are made by the engineers who implement them. Governance frameworks are designed by the pods who operate within them. And success is measured in production outcomes (reduced processing time, improved accuracy rates, measurable cost reduction), not in deliverable milestones.

The gap between proof-of-concept and production is an execution infrastructure problem. CodeRoad engineers the data layer, the MLOps governance, and the delivery capacity that take AI initiatives from stalled to shipped, and keep them performing after they land.